Navigating the social and economic implications of AI

“Few people in the world know better than I do what it’s like to have your life’s work threatened by a machine.”

Garry Kasparov, the first world chess champion to lose to a machine

The growing hype around Artificial Intelligence (AI) in 2025 was so strong that it probably served to make me more of a sceptic overall. However, as a chess player, Kasparov’s words struck a chord and I began to see the real-world social and economic implications of AI unfolding across our labour markets.

In this blog I’ll discuss why my overall position is that AI will deliver productivity gains, and society will benefit. However, it isn’t yet clear whether those gains will be marginal, similar to the introduction of the internet, or extreme, leading to large scale disruptions of industries and labour markets.

In the New Year I resolved to take this seriously, and I think others in the social policy community should too. Even a small change in hiring practices will have significant economic and social implications.

The developers of these technologies initially outsourced the social implications, and while they are beginning to think about these issues now, I think the rest of us need to step up to reflect on what is fundamentally a critical social policy issue.

“We are going to have to get comfortable with a smaller and smaller number of people creating more and more of the wealth. Even though this leads to more total wealth, it skews it toward fewer people. We’ll be faced with a new idle class. The obvious conclusion is that the government will just have to give these people money.”

OpenAI’s Sam Altman

The 2030 challenge: insights from The Windfall Trust

Left to right: Deven Ghelani, Policy in Practice; Adrian Brown, CEO of the Windfall Trust; and Kanishka Narayan, Minister for AI and Online Safety, at a Windfall Trust event exploring the impact of AI on society

I was recently invited to explore these themes with the Windfall Trust. Founded last year as a policy accelerator, the Trust moves beyond traditional think tank analysis to prepare society for the disruption of transformative AI. Their work considers the extreme ‘windfall’ example where advanced AI generates enormous wealth by taking over a whole range of tasks currently carried out by humans.

The Windfall scenarios shown in the graphic below focus on the year 2030, less than four years away.

AI labs expect impacts we can see this year to accelerate

Four years sounds like a ludicrously short timeframe for seismic shifts in the economy to manifest, but for the AI engineers I spoke with at the event, the 2030 scenario wasn’t ambitious enough.

Anthropic, DeepMind and OpenAI, who were all at the Windfall Trust’s event, argued that we are already past the tipping point. For some time, they have viewed coding as a solved problem and expect to see the impact this year, with other cognitive tasks to follow closely behind.

They argue that the only real limits to AI capability are the level of computational power (datacentres) available and data to train it on. Both are seeing substantial investment, totalling half a trillion dollars this year alone, with the availability of datacentre capacity seemingly the key constraint.

By way of example, a few days before the event, we saw Anthropic’s AI legal tool wipe billions of pounds off legal, data and software shares (which later largely recovered). And for an example close to home, I saw that Tom Loosemore, partner at Public Digital, built a benefit checker over a weekend using about £200 of Replit credits.

I was struck by their views about what happens next.

Cognitive work will be followed by robotics, with impacts on manufacturing and then onto healthcare, with the prospect of longer lives, neural implants, and whatever comes with that. I was disappointed to hear from an engineer at DeepMind that fusion energy is still a long way off, however.

This leads to a sobering perspective shared by philosopher Nick Bostrom:

“The window in which humans still actively compete against intelligent machines is very small and nearly insignificant compared to the machine supremacy that will inevitably follow.”

Nick Bostrom, philosopher

You don’t have to believe in artificial general intelligence (AGI) to take AI seriously, but the engineers building these systems are taking the extreme scenarios seriously, as shown by their investment in research teams considering what comes after AGI.

What feels more important to most of us, though, is that we are already living in an AI-enabled world, and AI doesn’t have to take over everything to have a big impact on the labour market and how we work.

Anticipating the social and economic implications of AI

Taking the Windfall 2030 scenario seriously, we see three impacts to consider for social policy:

1. Growth: AI will improve productivity but the gains won’t be shared equally. Those winning today, the better off and well established, will win more in the future, leading to greater inequality. Nations will win or lose too, and the UK, which is well placed to take advantage of the AI windfall, can’t take this position for granted.

2. Jobs: While AI will drive growth, it won’t necessarily drive employment. We’ll see the impact on those hardest hit when job growth slows, typically disabled people, carers, older workers and young people. Why hire and train an 18 to 24 year old when AI does the work at a fraction of the cost? We will see a growing premium for the highest skilled and most experienced workers, but what happens to people whose jobs are replaced by AI?

3. Infrastructure: Our physical infrastructure will become more important. Underinvestment in housing and energy already limits our growth and will continue to drive greater inequality until resolved; datacentre capacity is already putting pressure on the energy grid, impacting on community development. Equally important is our social infrastructure. How well we regulate AI will determine its adoption, the sharing of its gains and our ability to limit its harms across different sectors of society.

The imperative for action: a three pillar strategy

While the event suggested a range of ideas for specific action, a clear consensus emerged for action in three areas: tax, education and social security.

1. Tax: We need a new regime to capture the gains from AI

We couldn’t agree on the best way to raise taxes, although we favoured consumption, land and inheritance taxes over distortionary taxes on work. The Mirrlees Review suggests this is a solved problem, but it will need political will and international coordination.

2. Education: We will need to see a big investment in core skills and the early years

Intrinsic motivation and character will become the core drivers of individual and societal success, shaped by children’s experiences in the early years. People like James Heckman have called for a shift in investment from higher education to preschool and primary, to give young people the foundation on which to learn and adapt to an unpredictable and accelerating economy. Employers and governments will also need to actively look for, support and help develop these skills to help people reach their AI-enabled potential.

3. Social security: Investment in communities and third spaces

Family and clan networks will become even bigger drivers of success, and investment in communities and wider in-person social networks can help to give people access to opportunities. We will look to ‘hand and heart’ sectors, such as DIY and caring, for new jobs, and the value of these must be better recognised.

The UK can be a global leader

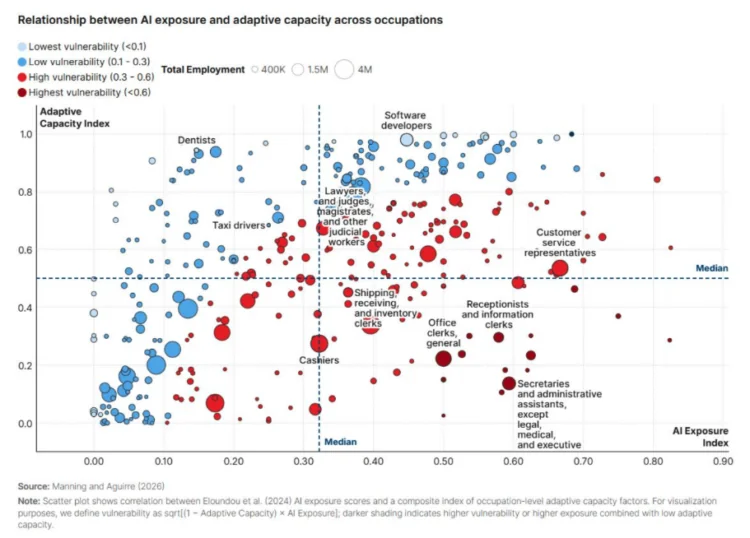

The chart below shows AI risk by occupation. We are already seeing disruption in some sectors, and will need to be ready as the scope of AI widens.

I am an optimist and believe that the UK is well positioned to lead this transition and to harness the benefits of AI. While a number of our key service sector industries will be disrupted, they also stand to gain the most from AI-driven productivity improvements.

But we can’t take our leadership for granted. Speaking with Ministers, it’s clear that they want to see the UK accelerate the pace of adoption, both in government and across the economy, so we can capture the windfall.

And for those that stand to lose out, at least in the short term, I argued that the UK social security system is well placed to support this transition.

Universal Credit is a proven mechanism for delivering a response to extreme social and economic challenges, as shown by its use during COVID. In our 2025 report, Putting the Universal into Universal Credit, Policy in Practice argued that Universal Credit can be made even more universal. We recommended lifting or raising the savings limit in Universal Credit. This, alongside a few other tweaks, would immediately make Universal Credit the basis of a Universal Basic Income.

When we think about the wider role of employment in providing purpose and structure, a broader form of Universal Support can be part of the solution. The Centre for Social Justice called for Universal Support alongside Universal Credit to help people build confidence while looking for work, and it could also help people to retrain and find purpose if their sector is impacted.

If we see serious disruption in the sectors shown as red in the graph above, we will need to be ready. Universal Credit in this form could be exported as a model for other countries to consider, as it would protect middle earners from the impact of AI, while retaining the incentive to work. More on this to come.

Policy in Practice is using AI safely and securely today to strengthen the social security net

Policy in Practice is experimenting more heavily with AI than ever, treating it as a tool to be guided safely and securely by humans. We think it can bring the future closer, but we need to shape it.

My observations on using AI at Policy in Practice:

- It enables employees to do more. All employees have access to Gemini, and all engineers have access to Claude Code and Copilot, as do a growing number of non-engineers.

- Attention and understanding matter more than ever. People need to verify what AI is doing, and show they understand it, otherwise they risk not adding anything of real value. This is a people management issue that’s worth addressing early.

- Security matters, and AI isn’t there yet. For a company that puts the security of our clients’ data as our top priority, AI-written code leaves too many holes. Our senior engineers review all the code we write and have flagged reliance on AI-written code as a risk others aren’t taking seriously.

- It’s great value for now. The AI we use today is heavily subsidised and organisations building dependencies on it need to bear that in mind.

This is the start of a conversation

It was great to think through the scenarios with brilliant social scientists and engineers at the Windfall Trust. I learned a huge amount, and left with a better understanding of what AI can do today, what it’s likely to be able to do in the future, and a greater sense of agency in our ability to shape that future.

We are pleased to be playing a small part by streamlining access to social security, using technology to strengthen the social safety net and widen the access to and use of public sector data across government.

If you are a client or partner who wants to learn more about the benefits and risks of AI, understand how we are using it, or share what you are up to, drop me a line at deven@policyinpractice.co.uk.